This CE Center article is no longer eligible for receiving credits.

As consumers, we are all acutely aware of the ongoing revolution in computer technology. The gadgets that are increasingly merging with our personal lives—including laptops, tablets, and mobile phones—keep getting smaller, lighter, and more powerful. Yet there is an equally significant revolution going on behind the scenes: In recent years, the information-technology (IT) industry has been working aggressively to reduce the sizable environmental footprint of data centers—the facilities that process and store all the content that consumers, businesses, and institutions increasingly rely on for both work and pleasure. “A sea change began over five years ago in data centers that is significantly lowering their electricity usage,” reports Peter Rumsey, West Coast managing director of Integral Group, a collaboration of engineers specializing in the design of cost-effective systems for high-performance buildings.

And the effort comes not a moment too soon, for while technological advances in recent years have resulted in microchips that compute significantly more per unit of energy, this increase in processing efficiency has been dwarfed by society's voracious appetite for computation—whether in the form of email, texts, tweets, video downloads, or actual number-crunching. According to a report to the U.S. Congress published by the U.S. Environmental Protection Agency (EPA) Energy Star Program in August 2007, the amount of electricity consumed by data centers nationwide more than doubled from 2000 to 2006. Although the rise has not been quite as steep since 2007—primarily due to ongoing improvements in efficiency, plus the general economic malaise—the rate of increase is still greater than within any other industry sector.

Given how much value is placed on connectivity in the IT world, it is ironic that so much of data centers' energy inefficiencies can be traced back to major disconnects between different sectors of the industry. For example, for years, IT spaces—whether they're computer-server rooms within larger office buildings or full-fledged stand-alone facilities—have been treated as rarefied zones that required low temperatures and a narrow range of humidity levels and that could not tolerate outdoor air.

|

|

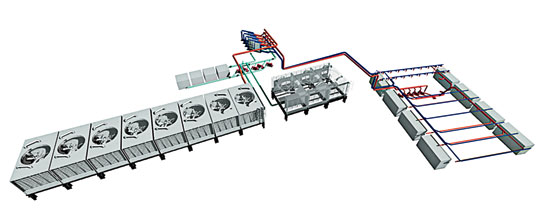

Conventional data centers consume massive amounts of energy to power and cool servers.

Photo © Istock Photo

|

As consumers, we are all acutely aware of the ongoing revolution in computer technology. The gadgets that are increasingly merging with our personal lives—including laptops, tablets, and mobile phones—keep getting smaller, lighter, and more powerful. Yet there is an equally significant revolution going on behind the scenes: In recent years, the information-technology (IT) industry has been working aggressively to reduce the sizable environmental footprint of data centers—the facilities that process and store all the content that consumers, businesses, and institutions increasingly rely on for both work and pleasure. “A sea change began over five years ago in data centers that is significantly lowering their electricity usage,” reports Peter Rumsey, West Coast managing director of Integral Group, a collaboration of engineers specializing in the design of cost-effective systems for high-performance buildings.

And the effort comes not a moment too soon, for while technological advances in recent years have resulted in microchips that compute significantly more per unit of energy, this increase in processing efficiency has been dwarfed by society's voracious appetite for computation—whether in the form of email, texts, tweets, video downloads, or actual number-crunching. According to a report to the U.S. Congress published by the U.S. Environmental Protection Agency (EPA) Energy Star Program in August 2007, the amount of electricity consumed by data centers nationwide more than doubled from 2000 to 2006. Although the rise has not been quite as steep since 2007—primarily due to ongoing improvements in efficiency, plus the general economic malaise—the rate of increase is still greater than within any other industry sector.

Given how much value is placed on connectivity in the IT world, it is ironic that so much of data centers' energy inefficiencies can be traced back to major disconnects between different sectors of the industry. For example, for years, IT spaces—whether they're computer-server rooms within larger office buildings or full-fledged stand-alone facilities—have been treated as rarefied zones that required low temperatures and a narrow range of humidity levels and that could not tolerate outdoor air.

|

|

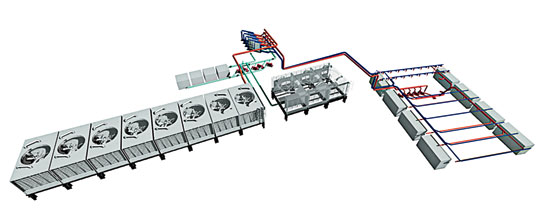

Conventional data centers consume massive amounts of energy to power and cool servers.

Photo © Istock Photo

|

Furthermore, staff within data-centric companies typically worked in departmental silos: IT personnel focused on purchasing and running equipment to obtain the highest capacity and reliability for their customers, paying little heed to how their decisions were affecting the facility department's budget and the company's overall bottom line. According to Rumsey, the energy to power a conventional 10- to 20-megawatt commercial-grade data center could run $10 million to $20 million per year. Over five years—which is about the expected life span of IT equipment—this amounts to the cost of the hardware itself. “People are finally waking up,” says Rumsey. “The breakthrough came in the last three to five years, when IT staff finally listened to facility operators.”
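As a rough check on those figures, the sketch below computes annual electricity cost for a facility drawing constant power. It assumes the load runs at full capacity for all 8,760 hours of the year, which is a simplification, and the function names are hypothetical. The electricity rate is not stated in the article; it is back-calculated here from Rumsey's numbers.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760; ignores leap years


def annual_energy_cost(load_mw: float, rate_per_kwh: float) -> float:
    """Yearly cost in dollars for a constant load (assumption: 100% load factor)."""
    kwh_per_year = load_mw * 1000 * HOURS_PER_YEAR
    return kwh_per_year * rate_per_kwh


def implied_rate_per_kwh(load_mw: float, annual_cost: float) -> float:
    """Electricity rate implied by a given constant load and yearly bill."""
    return annual_cost / (load_mw * 1000 * HOURS_PER_YEAR)


# Rumsey's estimate: about $10 million per year for a 10-megawatt facility.
rate = implied_rate_per_kwh(10, 10_000_000)  # roughly $0.11 per kWh
five_year_cost = 5 * annual_energy_cost(10, rate)
```

Under these assumptions, a 10-megawatt facility would spend about $50 million on electricity over a five-year hardware life span.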

Holistic Approach

Once the problem was acknowledged, the industry began tackling it in a much more holistic way. It turns out that every component and process, from power source and data management to mechanical systems, becomes fair game when trying to reduce energy consumption while maintaining the capacity and reliability on which customers increasingly depend.

Facebook’s LEED Gold data center in Prineville, Oregon, has an innovative cooling system to reduce overall energy use.

Photo © Jonnu Singleton Photography

And the reexamination has been intense in both the private and public sectors. Industry giants like Facebook and Yahoo! have reconceived their stand-alone data centers from the ground up. Building-standards organizations like the American Society of Heating, Refrigerating, and Air-Conditioning Engineers (ASHRAE) have established—and periodically revise—their recommendations for this building type, while IT-focused consortiums like the Green Grid and the Open Compute Project Foundation have sprung up to share ideas and disseminate best practices. Acknowledging the unique challenges of data centers, the U.S. Green Building Council has incorporated specific requirements for existing and new data centers as a separate building type in its current draft version of LEED v4. And both the EPA and the U.S. Department of Energy have ramped up their programs on data-center efficiency.

Unique Program

The primary purpose of a data center is to house, operate, and maintain information-technology equipment. By and large, this equipment runs 24/7 year-round. In doing so, a conventional facility “can consume 100 to 200 times as much electricity [per square foot] as standard office spaces,” according to High Performance Data Centers, a design-guidelines sourcebook written by staff at Integral Group and Lawrence Berkeley National Laboratory (LBNL) and published by Pacific Gas and Electric Company (PG&E) in January 2011.

Chart by David Foster

Although alternative, more energy-efficient configurations do exist, electricity entering a data-center facility in the United States typically goes through an uninterruptible power supply (UPS), which conditions the electricity to smooth out power spikes and serves as a battery backup in case the utility grid experiences a disruption in power. From the UPS it flows into power-distribution units (PDUs), then on to the IT equipment, which is often generically referred to as “servers.” A single server, which measures about 19 inches wide and 20 inches deep and no less than 1.75 inches in height, typically performs one function, for which it is named. Examples include database servers, file servers, mail servers, and Web servers.

Multiple servers are mounted one above the other on open racks about 6 feet tall. While small offices may have just a server closet with one rack and larger offices a server room with several racks, stand-alone enterprise data centers like those for Facebook and Yahoo! have halls with hundreds of racks lined up, row after row.

Facebook’s data center in Prineville, Oregon, is a LEED Gold facility designed as one large evaporative cooler, in which fans blow air through wet filters, cooling and humidifying the air before it reaches the servers.

Photo © Jonnu Singleton Photography

Because reliability is such a critical aspect in the computing world, data centers typically build in various redundancies—including extra UPS equipment, multiple air-conditioning systems, and additional servers—in the hope that there will always be sufficient backup power, cooling, and computing capacity in case user demand spikes or a problem occurs with one system or component. Although data centers do require some staff to operate and maintain the facility and processes, the number of occupants in a typical facility is relatively small compared with the square footage dedicated to IT equipment. The bulk of the energy consumed by data centers goes to powering and cooling their servers.

Establishing Metrics

One of the industry's early challenges in developing greener data centers was to come to a consensus on what it would measure to track success. In 2010, under the auspices of the Green Grid, an industry association formed in 2007 to improve resource efficiency within the IT field, an agreement was made to use a ratio called power-usage effectiveness (PUE), which is determined by dividing the total power used in both computing equipment and building infrastructure by the power used in the computing equipment alone. According to the Green Grid, the ideal PUE is 1.0, which would mean all energy used by a data center goes into powering the IT equipment rather than the building.

The average PUE for conventional facilities is currently about 2. In other words, the building that houses the IT equipment consumes as much energy as the data-processing equipment itself. “Some are much worse, like 3 or 4,” says Dale Sartor, a member of LBNL's building-technology and urban-systems department. “The best projects are those with a PUE less than 1.1,” he continues. According to Sartor, organizations like eBay, Google, Yahoo!, and Facebook have created the “icons” of high-efficiency projects: “They push the envelope in both the IT equipment and infrastructure side, and the gray area in between.”
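The PUE ratio is simple enough to express in a few lines. The following is a minimal sketch, not a Green Grid tool: the function names and the sample power readings are hypothetical, chosen only to reproduce the PUE values of 2 and 1.1 mentioned in the text.

```python
def power_usage_effectiveness(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Return PUE: total facility power divided by IT-equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT-equipment power must be positive")
    if total_facility_kw < it_equipment_kw:
        raise ValueError("total facility power cannot be less than IT power")
    return total_facility_kw / it_equipment_kw


def infrastructure_overhead_kw(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power consumed by the building itself (total minus IT load)."""
    return total_facility_kw - it_equipment_kw


# Hypothetical readings: two facilities, each with 1,000 kW of IT load.
conventional = power_usage_effectiveness(2000.0, 1000.0)  # building uses as much as IT
efficient = power_usage_effectiveness(1100.0, 1000.0)     # building adds only 10 percent
```

At a PUE of 2, the overhead equals the IT load itself; at 1.1, it is one-tenth of the IT load.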

Yahoo! has a state-of-the-art data center in Lockport, New York, featuring several long, heavily ventilated sheds to encourage natural airflow. Less than 1 percent of the building’s energy consumption is needed to cool the facility, which has been aptly nicknamed the “Yahoo! Chicken Coop.”

Photo courtesy Yahoo!

While PUE has become the accepted metric, it does not tell the whole sustainability story. It doesn't measure the energy efficiency of the servers themselves in accomplishing the desired action, be it a delivered email, a downloaded movie, or a financial transaction. It also does not differentiate between renewable and nonrenewable energy sources. And it gives no credit for projects that reuse the servers' waste heat for other purposes beyond the data center itself, such as heating habitable spaces in a neighboring building.

“There is an ongoing debate about measuring performance,” says Josh Hatch, director of sustainability analytics at Brightworks, a company based in Portland, Oregon, that has helped Facebook and other businesses incorporate sustainable practices. “Some people see PUE as a very successful metric, but others still see it as incomplete.” Although PUE is the most commonly referenced metric, others have been developed—not only for energy use but also for water efficiency and carbon footprint—to try to get a better handle on the full environmental impact of this building type.

Power Points

In conventional data centers, energy is wasted from the start, as it travels from the utility grid to the servers, because the electricity must be converted back and forth from alternating current to direct current and stepped up and down in voltage multiple times as it moves through the UPS, PDU, and various components within the IT equipment itself. Power is lost at each of these junctures. For its high-performance data center in Prineville, Oregon, which opened in 2011, Facebook customized power-supply equipment to eliminate the need for so many conversions. Meanwhile, researchers at LBNL are working with various manufacturers to create standardized products that would reduce the number of power-conversion requirements for the industry as a whole.
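Because losses compound, the overall efficiency of a power-delivery chain is the product of the efficiencies of its individual stages. The sketch below illustrates that arithmetic only. The stage efficiencies are placeholder values invented for the example, not measurements from Facebook, LBNL, or any manufacturer, and the function name is hypothetical.

```python
from math import prod
from typing import Sequence


def chain_efficiency(stage_efficiencies: Sequence[float]) -> float:
    """Fraction of input power that survives every conversion stage."""
    for eff in stage_efficiencies:
        if not 0.0 < eff <= 1.0:
            raise ValueError("each efficiency must be in (0, 1]")
    return prod(stage_efficiencies)


# Placeholder efficiencies for UPS, PDU, and server power supply (illustrative only).
many_conversions = chain_efficiency([0.90, 0.95, 0.90])

# Eliminating one conversion stage raises the fraction of power that reaches the chips.
fewer_conversions = chain_efficiency([0.95, 0.90])
```

With these placeholder numbers, roughly 77 percent of incoming power survives three stages, versus about 86 percent with two, which is why removing conversions matters.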

Energy waste in conventional data centers is also due to unnecessary redundancies and the inefficient use of servers: Multiple servers are typically kept running on standby just in case a portion of their computing capacity may be needed occasionally. So, in addition to purchasing the most efficient servers for their needs, IT staff must implement appropriate data-management strategies to minimize the total number of servers in operation.

Chart by David Foster

Instead of assuming that all data must be treated equally, for example, energy-conscious data centers evaluate the actual level of reliability needed for the type of data being processed. By limiting the highest level of protection to the most sensitive types of data, IT staff can reduce the number of servers sitting idle, and the associated power and cooling systems that would otherwise be needed to keep these backup servers running.

In addition, all data centers can benefit from server-virtualization software, which allows different applications to be handled on one physical server without interfering with each other. By running multiple functions on a single piece of equipment, data centers can increase the utilization rate of servers from about 10 percent to more than 60 percent, according to Sartor. This can significantly reduce the number of servers that must be kept on standby day in and day out.

Virtualization also eliminates the problem of abandoned data, because if data does not have to be assigned to a dedicated server, it does not contribute to the energy drain when not being used. And it improves reliability without adding to energy costs because it allows applications to move from server to server: “If one server fails, you can move to another server. In fact, if an entire data center fails, you can move to another data center,” says Sartor.
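The effect of higher utilization on server count can be estimated with simple arithmetic. This sketch assumes the total computing workload stays constant and that every server has identical capacity, which is a simplification. The function name and the 600-server starting fleet are hypothetical.

```python
import math


def servers_needed(current_servers: int, current_utilization: float,
                   target_utilization: float) -> int:
    """Servers required to carry the same workload at a higher utilization rate."""
    if not (0 < current_utilization <= 1 and 0 < target_utilization <= 1):
        raise ValueError("utilization must be in (0, 1]")
    # Workload expressed in units of "fully busy servers".
    workload = current_servers * current_utilization
    # Round before taking the ceiling so floating-point noise cannot add a server.
    return math.ceil(round(workload / target_utilization, 6))


# Utilization rates cited by Sartor: about 10 percent before, more than 60 percent after.
after = servers_needed(600, 0.10, 0.60)
```

Under these assumptions, moving from 10 percent to 60 percent utilization cuts the fleet to about one-sixth of its original size.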

Cool Concepts

In a conventional data center, most of the energy consumed by the building infrastructure itself goes to conditioning the spaces that house the servers. Like all electronic equipment, computer servers generate significant amounts of heat. “If it gets too hot,” says Hatch, “the server can burn out.” In addition, according to Green Tips for Data Centers—the tenth book in a series by ASHRAE Technical Committee 9.9, which focuses on mission-critical facilities, technology spaces, and electronic equipment—condensation from excess humidity can cause corrosion or data-transmission errors, while electrostatic discharge due to too little humidity can damage equipment or corrupt data.

To minimize energy waste, data-center designers and operators must carefully contain and control air that has been conditioned for the servers. For example, incoming air meant to cool the servers must not be allowed to mix with the hot air exhausted from them. Current guidelines recommend organizing racks in alternating rows so server fronts face server fronts and server backs face server backs. The path running between the front (or air intake) sides of the servers is referred to as the “cold aisle,” and the path between the back (or air exhaust) sides is the “hot aisle.” The coolest air is brought into the server room—ideally through underfloor ducts—into the cold aisles, from where it is drawn into the servers by fans embedded within the IT equipment. Once it absorbs the heat of the servers, the air is blown out the back of the equipment into the hot aisle, from where it should be exhausted from the data hall before it has a chance to mix with incoming cold air. Physical barriers—like plastic sheeting—should be installed over empty slots in racks to prevent hotter air from seeping back into the cold aisle.

NREL’s Energy Systems Integration Facility in Golden, Colorado, is expected to be completed later this year.

Image courtesy of Integral Group

Air management can be particularly problematic in older computer rooms that rely on multiple computer-room air-conditioning units fitted with internal humidifiers. If not calibrated together, these stand-alone machines, which are located within the computer room and cool with dedicated compressors and refrigerant cooling coils rather than chilled-water coils, can start working against each other—one is humidifying the room while an adjacent one is dehumidifying. Current ASHRAE guidelines recommend that only one unit should handle humidity levels—both dehumidification and humidification functions should be removed from all the others—to avoid this common problem. Monitoring and control systems also play an important role in conserving energy. Traditionally, control systems were based on the temperature of the return air, but current guidelines suggest placing sensors near the server's air intake, which is where the desired temperature is most critical.

Undoubtedly, the most significant change in data-center air management in recent years has been the industry's re-evaluation of acceptable environmental conditions. For years, IT professionals insisted that all computer rooms remain between “68 and 70 degrees Fahrenheit with a relative humidity between 40 and 60 percent,” says Paul Bonaro, who manages the Yahoo! data center in Lockport, New York. These ranges, however, were never backed by any industrywide, research-based consensus. The ASHRAE Technical Committee 9.9 was formed in 2003 as an outgrowth of an industry effort to establish such specifications. In 2004 it published its first edition of Thermal Guidelines for Data Processing Environments, which provided recommended and allowable temperature and humidity ranges for two classes of data centers—categorized according to the needed level of reliability—based on input from major IT-equipment manufacturers. The temperature and humidity ranges were broadened in a second edition, which came out in 2008, to reflect growing concern over energy costs. These ranges were increased again and the number of data-center classes expanded to four—reflecting a more nuanced understanding of reliability among different centers—in the third edition, which came out in 2011.

According to the most recent guidelines, a data center requiring the lowest level of reliability could, on occasion, have a server-intake temperature as high as 113°F (assuming the relative humidity is in the 10 to 30 percent range) or relative humidity levels as high as 90 percent (assuming the temperature does not go above 75°F). Meanwhile, testing at LBNL and elsewhere has demonstrated that servers can function perfectly well with outside air that has been properly filtered. The more relaxed requirements have given designers a great deal more leeway in terms of how computer spaces can be conditioned with less energy. At the very least, raising the acceptable room temperature in server rooms means that the traditional mechanical systems used to supply conditioned air to data centers do not have to generate as much cooling. Furthermore, in areas where the outdoor temperatures regularly dip below 75°F or so for some periods, these systems can be designed for air-side economizers. In this scenario, the compressor temporarily shuts off while the cool outside air is pulled into the mechanical system and directed to the computer room for “free” or “compressor-less” cooling. Several industry leaders—including Facebook and Yahoo!—have gone further: eliminating conventional mechanical systems completely and relying instead on a combination of outdoor air and evaporative cooling techniques to maintain proper interior temperatures and humidity levels year-round.
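The decision an air-side economizer makes can be sketched as a simple threshold test. This is an illustrative simplification, not a real building-control algorithm: it ignores humidity, air quality, and hysteresis. The names are hypothetical, and the default threshold is an assumption taken from the article's “below 75°F or so.”

```python
from enum import Enum
from typing import Iterable


class CoolingMode(Enum):
    FREE_COOLING = "outside air, compressor off"
    MECHANICAL = "compressor on"


def select_cooling_mode(outdoor_temp_f: float,
                        economizer_limit_f: float = 75.0) -> CoolingMode:
    """Use outside air when it is cooler than the limit; otherwise run the compressor."""
    if outdoor_temp_f < economizer_limit_f:
        return CoolingMode.FREE_COOLING
    return CoolingMode.MECHANICAL


def free_cooling_hours(hourly_temps_f: Iterable[float],
                       economizer_limit_f: float = 75.0) -> int:
    """Count the hours in a temperature record when the compressor could stay off."""
    return sum(
        1 for temp in hourly_temps_f
        if select_cooling_mode(temp, economizer_limit_f) is CoolingMode.FREE_COOLING
    )
```

Running `free_cooling_hours` over a year of hourly weather data for a candidate site gives a first-order sense of how much compressor time an economizer could avoid there.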

|

|

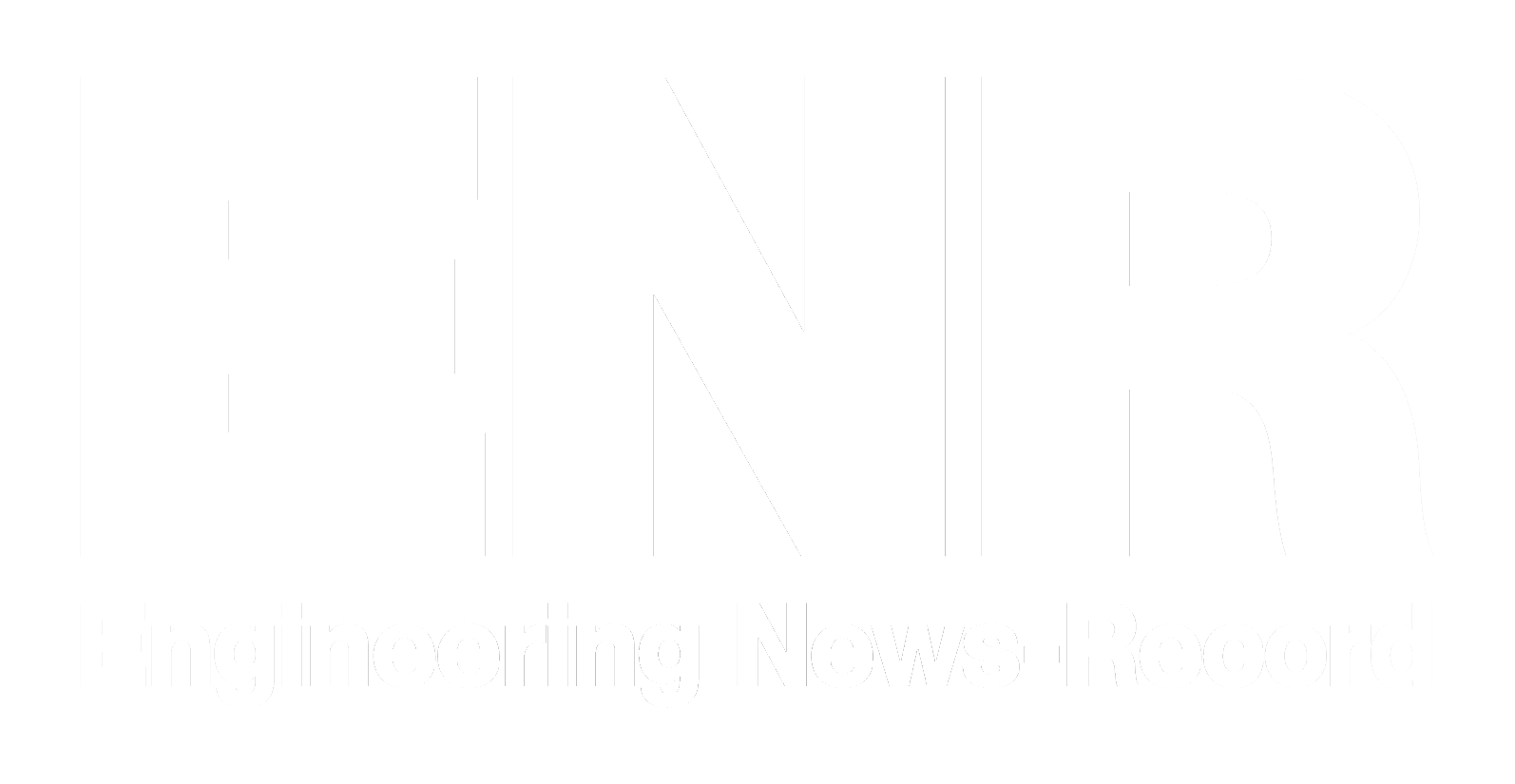

Servers at NREL’s highly efficient data center will be cooled year-round with water maintained at 75 °F by cooling towers. Waste heat from servers will condition both offices and lab spaces. Mechanical system designed by Integral Group.

Image courtesy Integral Group

|

Data centers are also experimenting with using water for cooling. Water is a much more efficient medium than air for heat transfer, because it absorbs and releases heat more easily and can transport a greater amount of thermal energy within a smaller volume. To reduce reliance on the mechanical cooling of water, some innovative data centers are going to water-side economizers, in which the outside environment cools the water to the desired temperature: Cooling towers can remove excess heat from water through evaporation, or cool water can be pulled from nearby rivers, lakes, seas, and even sewers—and cleaned up, if necessary, before being returned to its source.

eBay is planning to use Bloom Energy fuel cells to power its new data center in South Jordan, Utah.

Photo courtesy Bloom Energy

The cool water from the outside can be routed through more conventional chilled-water mechanical systems that rely on air handlers to deliver cooling to the actual data halls, or it can be brought closer to the servers themselves. Although there is some concern within the industry regarding bringing water into an area densely filled with electronic equipment, strategies have been—and continue to be—developed to do this in as safe a manner as possible, because the closer the cool water is brought to the server, the more efficient the system.

Clearly, there is no one magic bullet to lower the energy use of data centers, but a whole host of interlinking strategies to consider. Fortunately, the industry has at least begun the transition. According to a recent comment from the EPA, “We have seen data center operators making great strides in their efforts to reduce energy use in their facilities.” And the agency believes the industry can continue to achieve significant energy and cost savings: “Based on the latest available data, improving the energy efficiency of America's data centers by just 10 percent would save more than 6 billion kilowatt-hours each year, enough to power more than 350,000 homes and save more than $450 million annually.”